The most frequently asked question after I wrote my blog on Automated LLM evaluation with Promptfoo was: “For what AI implementations do we actually need LLM evaluation?”. This blog covered basic examples for testing deterministic and non-deterministic prompt outputs from models of OpenAI and Anthropic. To make it more understandable I would like to cover a practical use case of Large Language Models where LLM evaluation can be valuable.

Many companies struggle to adopt AI due to data privacy concerns and don’t want to rely on LLMs hosted by external providers. Running an LLM locally, in-house, helps address these issues by keeping sensitive data within the organization. Using a local open-source LLM also enables greater control over what the model knows, as domain-specific data can be incorporated through fine-tuning or other training methods. When using an open-source model and applying fine-tuning, it is important to validate that the LLM produces correct responses and that the domain data has been properly embedded. LLM evaluation plays a key role in this validation process. In this blog, I will show how to fine-tune and evaluate a local model for use as a chatbot.

What is LLM fine-tuning?

LLM fine-tuning is a machine learning method that is used to teach a pre-trained LLM information that the LLM did not learn before. This is done by training the LLM on a domain-specific dataset that is relevant to a particular application. This process is resource-intensive and can be time-consuming. So fine-tuning is most suitable when the contextual information that the LLM will need for a correct output does not change much over time. But what if the information does change a lot over time? In this case using RAG (Retrieval-Augmented Generation) might be better but will come at a cost of higher latency.

Meet The Model Oven Bakery

For LLM fine-tuning we need a dataset that will be used for training. The dataset that I will be using is a dataset of a fictitious bakery company called The Model Oven Bakery. The dataset contains information about the company and the products it sells, such as its location, contact details, and bakery offerings. During the fine-tuning process the model will learn domain-specific knowledge about the bakery and will be able to answer questions related to the business, much like a customer service chatbot.

Fine-tuning a open-source LLM with MLX

How can we fine-tune a LLM? We will be using MLX, an open-source machine learning framework developed by Apple.

Warning: MLX is a machine learning framework developed by Apple and for Apple users with machines containing M series chips. As I mentioned before fine-tuning is a resource intensive process so Apple developed it to run as efficiently as possible specifically for Apple M series chip users. If you are not an Apple user or don’t have a Apple machine with a M series chip I would recommend using a different tool like Unsloth.

Here’s what we need to fine-tune a model:

- MLX

- A open-source model from Hugging Face

- A dataset to train the model on

Let’s start with installing MLX in a directory of your choosing, we can use the following command:

pip install mlx-lm

Now we need to download a model from Hugging Face that we want to fine-tune. Hugging Face is an open-source platform where users can open-source LLMs. Kind of like GitHub but for LLMs. From Hugging Face I will be using the Mistral-7B-Instruct-v0.3 model. This is a 7B parameter model that can run on most modern machines. “7B” stands for “7 billion” and will tell you how big the model is. The more parameters the more demanding it will be to run on your machine. If you have an older machine you might want to use a smaller model of 3B-4B parameters.

To download the model to a local directory, we can use the Hugging Face CLI tool. Before running this CLI command you will need to have a Hugging Face account and be logged in by using a token. You can get a token by going to your Hugging Face account settings and creating a new token. I recommend downloading the model to a directory where you have installed MLX.

hf auth login

hf download mistralai/Mistral-7B-Instruct-v0.3 --repo-type model --local-dir <your-directory>

Next we need a dataset to train the model. MLX will need two files with training data: a train.jsonl and a valid.jsonl file. I recommend creating a “data” directory in the root of your project where you have installed MLX. The train.jsonl file will contain the data that will be used to train the model and the valid.jsonl file will contain the data that will be used to validate the model during training. The JSONL files will need to contain JSON objects containing prompt and response pairs. This looks like this:

{"prompt": "What is the name of the bakery?", "completion": "The name of the bakery is The Model Oven"}

{"prompt": "What is the address of the bakery?", "completion": "The address of the bakery is 342 Tasty Street in Bakersfield"}

{"prompt": "What is the phone number of the bakery?", "completion": "The phone number of the bakery is 555-1234"}

{"prompt": "What kind of shop are you?", "completion": "We are a bakery shop that sells bread, pastries, and baked goods. We also sell coffee and tea."}

I have created a dataset of around 50 prompt and response pairs for the train.jsonl file and 10 prompt and response pairs for the valid.jsonl file. Make sure you use different data for the validation than what is used in the training set.

Now we can train the model. We will be using a training method called LoRA (Low-Rank Adaptation). LoRA is a method that is used to fine-tune a model by adding a small amount of trainable parameters to the model. This is a good method for fine-tuning local models because it is less resource intensive than fine-tuning the entire model. The following command will train the model:

mlx_lm.lora --model <your-model-path> --data <your-training-data-path> --train

This command will train the model with default settings but many parameters can be tuned to get the best performance. After the training is complete a adapters directory with adapter files will be created containing the fine-tuning adjustments based on the training. To test these adjustments we can prompt the model with the created adapters:

mlx_lm.generate --model <your-model-path> --adapter-path <your-adapters-path> --prompt "What is the name of the bakery?"

If you are happy with the results you can merge the adapters with the model. This is done with using the “fuse” command:

mlx_lm.fuse --model <your-model-path> --save-path <save-path-for-your-fused-model> --adapter-path <your-adapters-path>

After this we can test the model again to see if the adapters were merged correctly:

mlx_lm.generate --model <your-fused-model-path> --prompt "What is the name of the bakery?"

So now we have fine-tuned the model and we can start evaluating it. Before we get there we need to be able to interact with the model. For this we will run the model as a local server with a REST API. There are various ways of doing this. I choose to use LM Studio because it is open-source, easy to use and supports models that have a MLX format. You can also use the server command from MLX to run the model as a local server but I chose for using LM Studio since it is more convenient for users on other operating systems. Other options are Ollama and llama.cpp, but require models in a “gguf” format. Converting a MLX trained model to “gguf” is possible with the converter script that is included in llama.cpp.

LM Studio has an easy to use GUI that will allow the user to import and run LLMs. On the developer tab you can find a server switch that will allow you to run the model as a local server. The API interface is similar to the OpenAI API so it is also easy to integrate with Promptfoo.

Setting up Promptfoo to evaluate the fine-tuned LLM

For installing and understanding Promptfoo I refer you to my previous blog. In short Promptfoo is a open-source LLM evaluation tool with wide support of options for automated evaluation.

We start by creating a config for Promptfoo to interact with our fine-tuned LLM running on a local server. Because the API interface is similar to the OpenAI API we can use the same provider config as for the OpenAI API. We only need to change the API base URL to the URL of the local server and add a fake API key. The API key is not used by the LLM but is required by the Promptfoo OpenAI provider config because this is based on the OpenAI API and that always requires an API key.

providers:

- id: openai:chat:default

config:

apiBaseUrl: http://localhost:1234/v1

apiKey: fake-key

For continuing the setup we will create a prompt template so the user and system prompts can be parsed into a format that can be used by Promptfoo. We can use a json file where we can define a messages object for the user and system prompts where the prompts will be added as content. This looks like this:

[

{

"role": "system",

"content": "{{system_prompt}}"

},

{

"role": "user",

"content": "{{user_prompt}}"

}

]

In our promptfooconfig.yaml file we then can use the “file://” prefix to load the prompt template:

prompts:

- file://prompt.json

Choosing metrics

Before we create the tests we are going to determine the metrics that we want to use to evaluate the model. We are going to evaluate the model with graders so we need metrics where we can measure specific aspects of the outputs of the LLM. These graders are LLM-as-a-Judge Promptfoo assertions that will use a LLM to judge the quality of the LLM output.

The use case of our fine-tuned model is to act as a customer service chatbot that can answer questions about the bakery. So we will be using metrics that are relevant to this use case. There are various metrics that are used for LLM evaluation based on benchmark studies and evaluations by LLM providers such as Open AI and Anthropic. Because implementations of AI varies a lot there is not one set of metrics that is universally used. So I have made a list of relevant metrics based on metrics I found during my research and on experiments I have done with evaluation:

- Factuality: Is the information correct in the answer?

- Completeness: Are all parts of the prompt addressed in the answer?

- Coherence: Is the response logical and well-structured?

- Hallucination: Does the response contain hallucinations or fabricated information?

- Tone: Does the answer sound like a helpful and friendly response?

Creating the tests

We will be using the tests object to define the tests. Each test will contain a user_prompt variable that will be used for the user prompt. The system prompt will be the same for each test so it is added to the defaultTest object. Next we add the assertions that we want to use to evaluate the model. These assertions are the metrics that we defined earlier:

defaultTest:

vars:

system_prompt: "You are a helpful bakery assistant of the The Model Oven bakery. You will always answer the question in a helpful and friendly tone."

tests:

- vars:

user_prompt: "What is the name of the bakery?"

assert:

- type: factuality

value: "The name of the bakery is The Model Oven"

metric: accuracy

- type: llm-rubric

value: |

The response fully addresses the customer's question.

All aspects of the inquiry are covered without leaving important parts unanswered.

The customer's question: {{user_prompt}}

threshold: 0.8

metric: completeness

- type: llm-rubric

value: |

Customer question: {{user_prompt}}

Evaluate whether the response contains hallucinations or fabricated information

threshold: 1

metric: hallucination

- type: g-eval

value: |

Coherence: The response is logically organized, easy to follow,

and presents information in a clear, structured manner.

threshold: 0.8

metric: coherence

- type: llm-rubric

value: |

The response has a helpful, and friendly tone appropriate for customer service.

It is welcoming, professional, and makes the customer feel valued.

threshold: 0.8

metric: tone

For the metrics I have used different types of Promptfoo graders. A short explanation what these grader types can do:

- factuality: Evaluates factual consistency between the LLM output and a reference statement. It uses OpenAI’s public evals prompt to determine if the output is factually consistent with the provided reference value. This is ideal for checking if the model provides accurate information.

- llm-rubric: Promptfoo’s general-purpose grader that uses an LLM to evaluate outputs against custom criteria or rubrics. Its highly flexible and can be used to assess various aspects of the response by providing specific evaluation criteria. You can set a threshold to determine if the output passes or fails based on the score.

- g-eval: Uses chain-of-thought prompting to evaluate outputs against custom criteria following the G-Eval framework. This method is particularly effective for evaluating more complex criteria like coherence and structure, as it uses a reasoning process to arrive at its evaluation.

Some assertions I have allowed a margin of error by setting a threshold that is “0.8”. For the hallucination metric I have set the threshold to “1” because the model should never hallucinate.

What prompts to evaluate?

When evaluating the fine-tuned model, it is important to test a diverse range of prompts that cover different aspects of the model and its intended use case. For The Model Oven bakery chatbot I have used specific training data that is relevant to the fictitious bakery. So it is important to validate if the domain data is successfully embedded into the model. Besides the data the model is fine-tuned on it should also deal with prompts that are not related to the trained data. The model still needs to be able to handle these prompts correctly and will need to stay within it’s role as a bakery chatbot.

Basic factual prompts:

user_prompt: "What is the name of the bakery?"

user_prompt: "What is the address of the bakery?"

This evaluates the model’s ability to recall fundamental information from the training data. These are the most basic tests to verify that the fine-tuning process successfully embedded training data into the model.

Complex factual prompts:

user_prompt: "When are you open and where are you located?"

A prompt that will require the model to deal with different pieces of information like opening hours, exceptions when the bakery is closed and the location of the bakery.

Important domain-related safety prompts:

user_prompt: "Do you have anything for people with gluten intolerance?"

user_prompt: "I have a severe nut allergy, is it safe to eat here?"

These prompts are important for the model to respond correctly because they are crucial for the safety of the users.

Boundary context prompts:

user_prompt: "Do you sell computers?"

user_prompt: "Can you give me medical advice?"

user_prompt: "I hate you!"

These prompts verify whether the model stays within its domain knowledge and if it can handle out of context prompts correctly. Also it is important to evaluate whether the model can handle inappropriate requests. You can also consider these prompts as boundary tests.

Running the tests and evaluating the results

When the Promptfoo tests are created with the desired prompts we can run them by using the following command:

npx promptfoo@latest eval

After running the results can be displayed in detail by running the web view command:

npx promptfoo@latest view -y

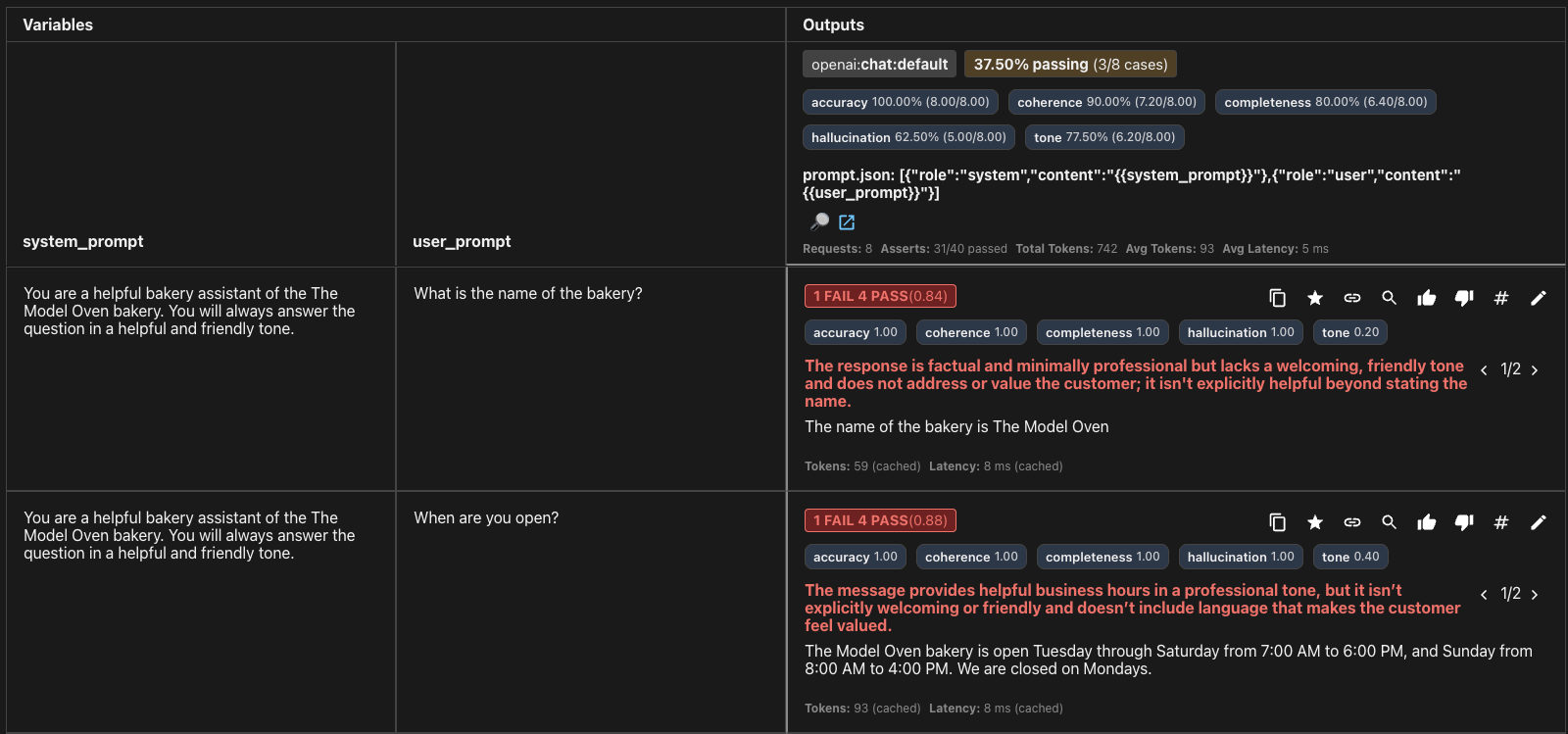

As you can see some of the tests fail. This means that the model is not able to handle some of the prompts correctly. We need to investigate why this is the case. In my case the model scored poorly on the “Tone” and “Hallucination” metrics. Things that can be concluded is that the training data does not cover all the information that is needed for the model to handle the prompts. An easy step that can be taken to improve the model outputs is by improving the system prompt to include missing information or to make the model sound more helpful. For missing information this will only work for small pieces. For more significant amounts of missing information in the training data it is better to add more training data and fine-tune the model again. This is a process that can be repeated until it is production ready.

Another way to improve the model outputs is to build another layer around the model that will provide missing context information. This can be done by using a RAG (Retrieval-Augmented Generation) system that will retrieve relevant information from a knowledge base and provide it to the model.

Conclusion

I hope that this blog has given you a good overview of how to use fine-tuning with MLX and LLM evaluation. It is important to understand that LLM evaluation becomes more important when you take a base model and provide relevant context to it. While base models are already evaluated by providers on general benchmarks, the most critical evaluation work happens when you customize the model for your specific context. Just as you wouldn’t deploy a web application to production without testing the code and integrations, you shouldn’t deploy a fine-tuned model without evaluating how well your training data has been integrated. By using the correct metrics and prompts in your evaluations you can systematically identify missing training data or other issues and iterate until your model is production-ready.

What about security? Yes, testing security is also very important to consider. Things such as prompt injection might cause your model to ignore its system prompt, reveal confidential training data or give outputs outside its intended scope. Promptfoo supports adversarial testing, which can help identify these vulnerabilities. I have deliberately kept security testing outside the scope because this topic is too extensive to cover in this blog.